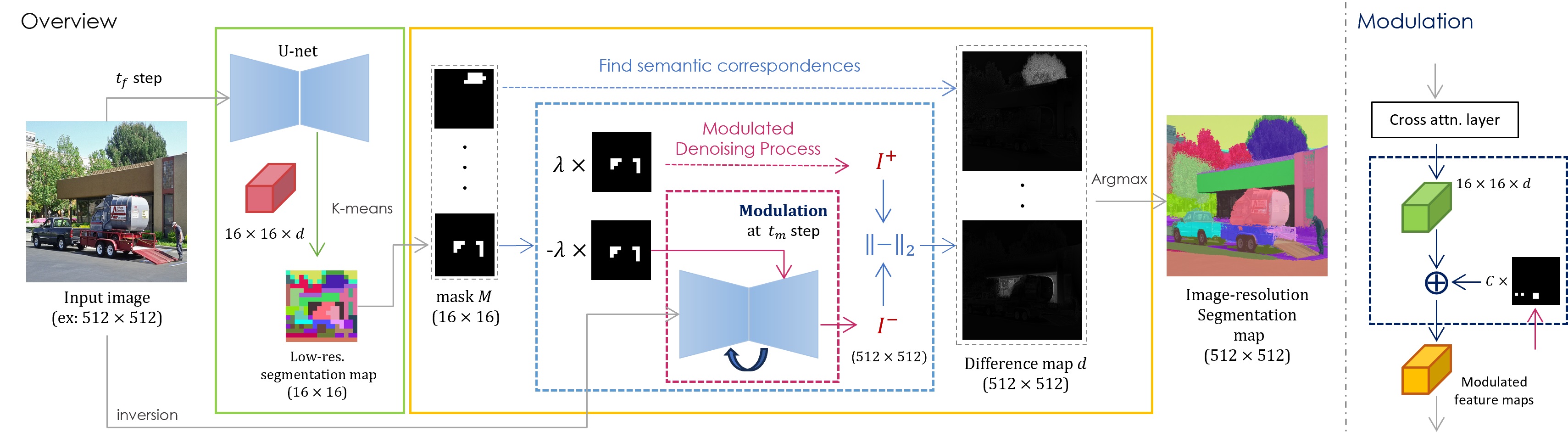

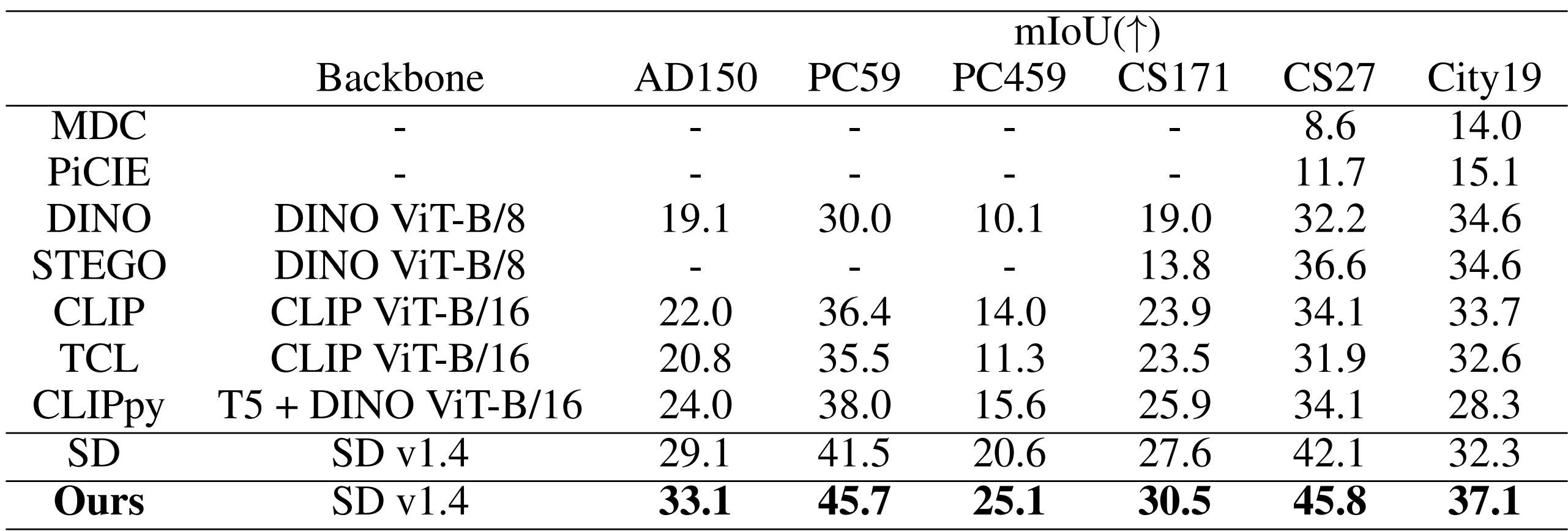

Diffusion models have recently received increasing research attention for their remarkable transfer abilities in semantic segmentation tasks. However, generating fine-grained segmentation masks with diffusion models often requires additional training on annotated datasets, leaving it unclear to what extent pre-trained diffusion models alone understand the semantic relations of their generated images. To address this question, we leverage the semantic knowledge extracted from Stable Diffusion (SD) and aim to develop an image segmentor capable of generating fine-grained segmentation maps without any additional training. The primary difficulty stems from the fact that semantically meaningful feature maps typically exist only in the spatially lower-dimensional layers, which poses a challenge in directly extracting pixel-level semantic relations from these feature maps. To overcome this issue, our framework identifies semantic correspondences between image pixels and spatial locations of low-dimensional feature maps by exploiting SD's generation process and utilizes them for constructing image-resolution segmentation maps. In extensive experiments, the produced segmentation maps are demonstrated to be well delineated and capture detailed parts of the images, indicating the existence of highly accurate pixel-level semantic knowledge in diffusion models.

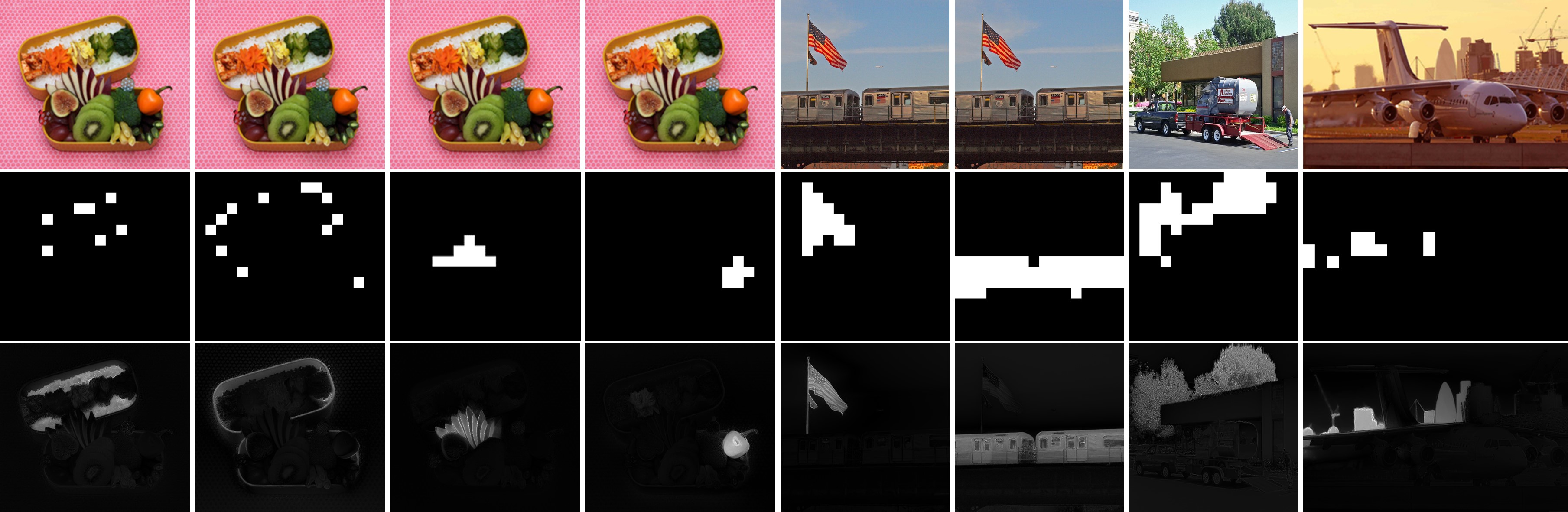

We begin by investigating how a local change in the values of low-resolution feature maps (e.g. 16×16) influences the pixel values of the generated images (e.g. 512×512). We discover that when we perturb the values of a sub-region of low-resolution feature maps (middle row of the figure below), the generated images are altered in a way that only the pixels semantically related to that sub-region are changed notably (bottom row of the figure).

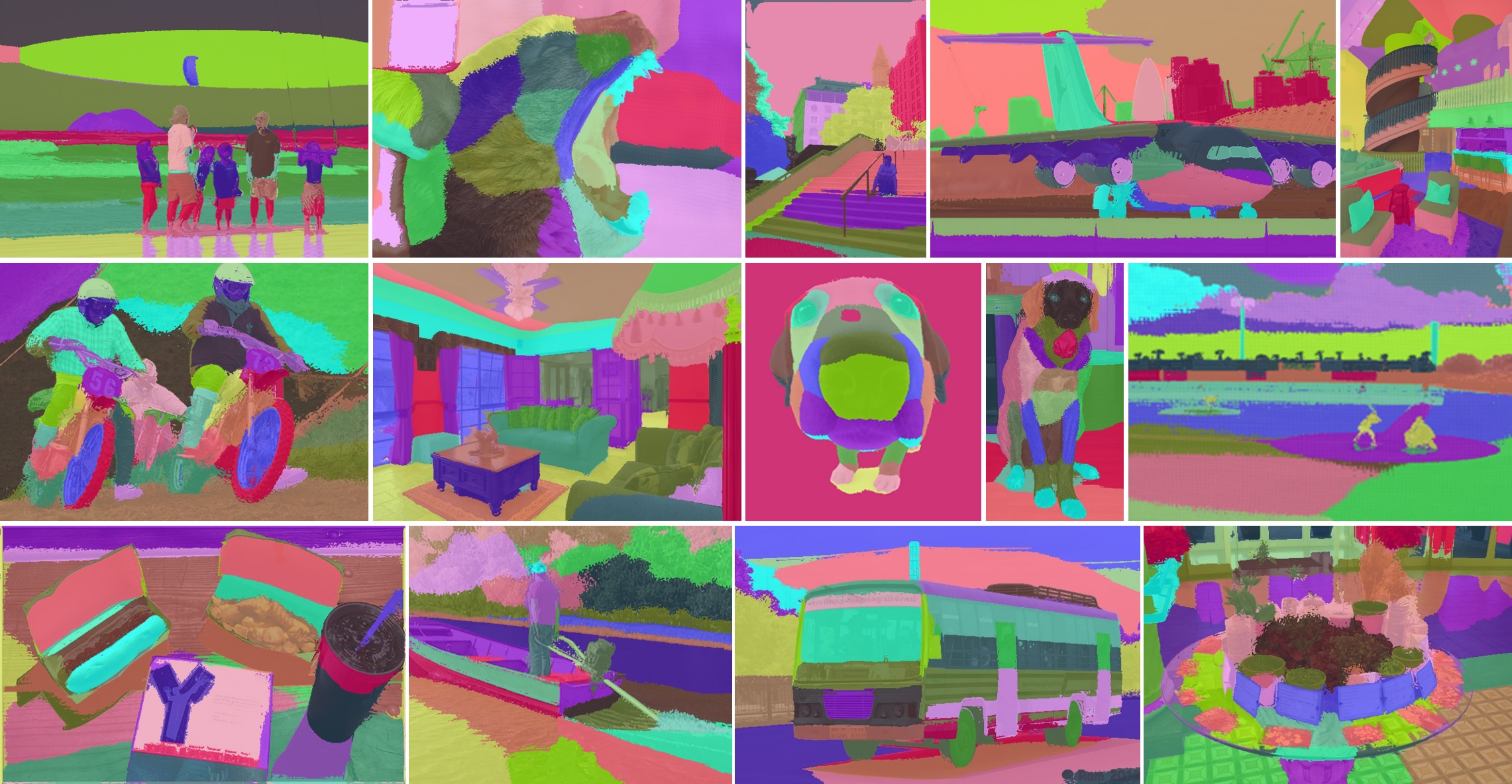

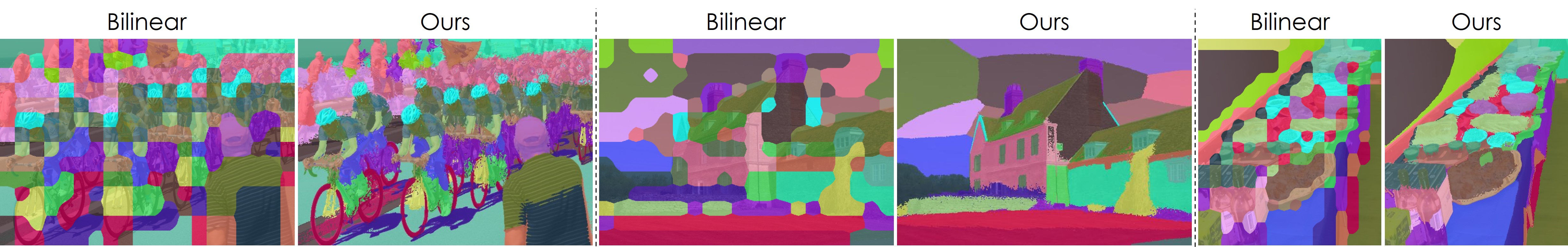

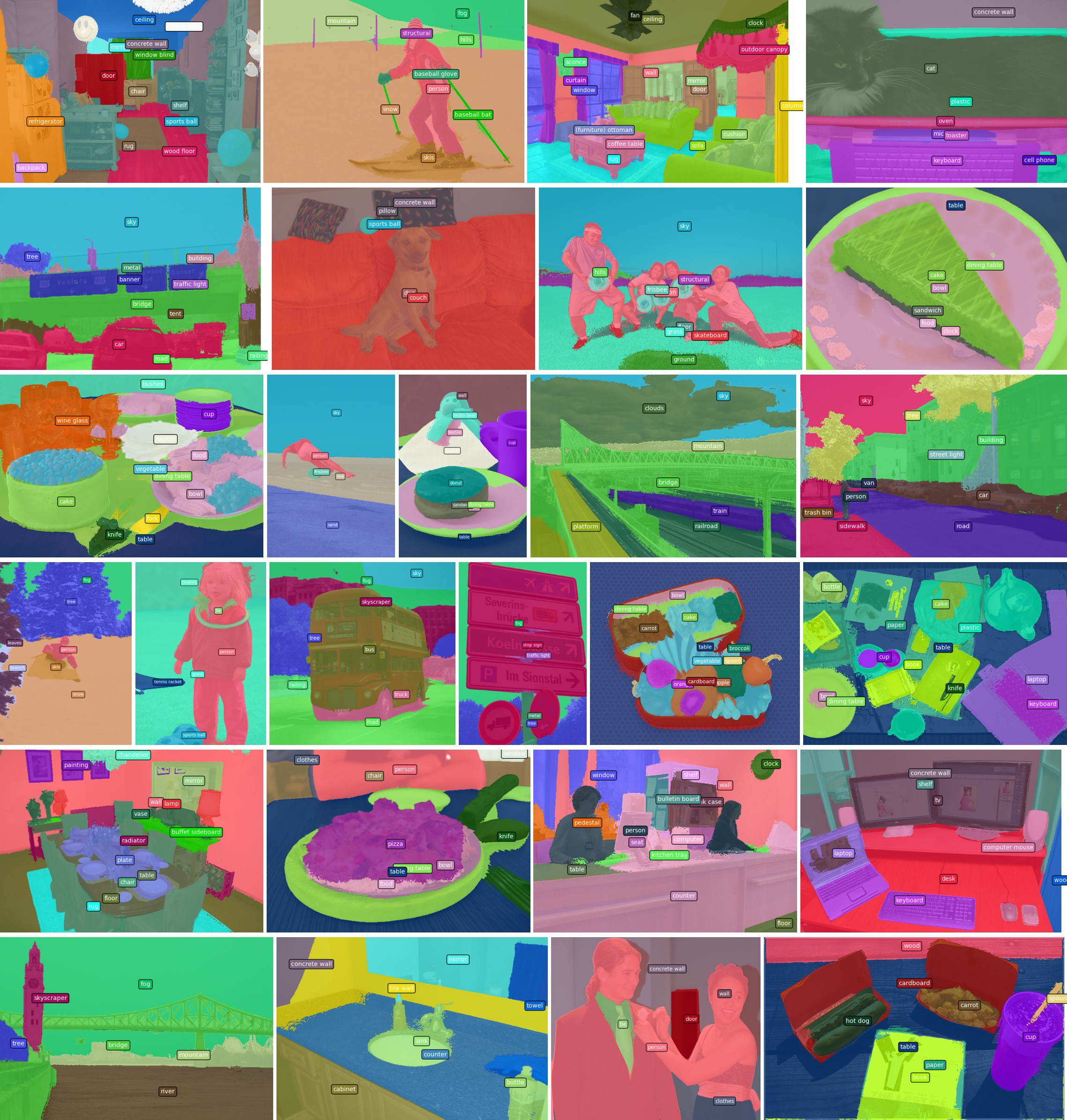

Qualitative comparison with naively upsampled low-resolution segmentation maps. Our segmentation maps are fine-grained and precisely capture detailed parts of the objects.

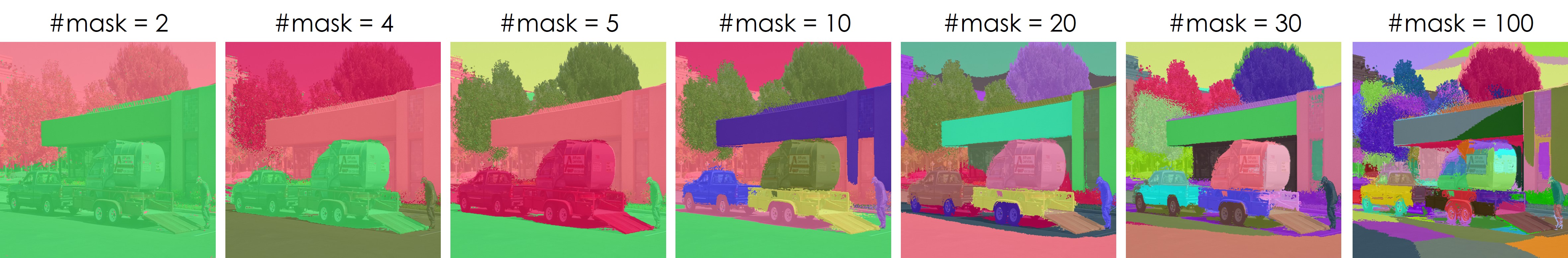

Qualitative comparison with naively upsampled low-resolution segmentation maps. Our segmentation maps are fine-grained and precisely capture detailed parts of the objects. Varying the number of segmentation masks. Our framework consistently groups objects in a semantically meaningful manner.

Varying the number of segmentation masks. Our framework consistently groups objects in a semantically meaningful manner.

@inproceedings{namekata2024emerdiff,

title={EmerDiff: Emerging Pixel-level Semantic Knowledge in Diffusion Models},

author={Koichi Namekata and Amirmojtaba Sabour and Sanja Fidler and Seung Wook Kim},

booktitle={The Twelfth International Conference on Learning Representations},

year={2024},

url={https://openreview.net/forum?id=YqyTXmF8Y2}

}